Essential Kafka Fundamentals

- Author: Ram Simran G (Twitter: @rgarimella0124)

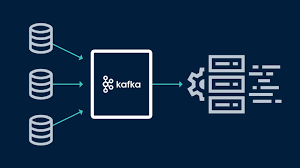

Inspired by an insightful tweet highlighting core Kafka ideas that stand the test of time, this blog post dives into 16 fundamental concepts every Kafka user, from developers to architects, should master. These aren’t fleeting trends; they’re the bedrock of Kafka’s reliability, scalability, and performance. For each, I’ll give a clear explanation and a real-world use case, keeping things balanced and actionable. Whether you’re building event-driven systems or troubleshooting production clusters, understanding these will save you headaches and unlock Kafka’s full potential.

1. Topic vs. Topic-Partition

Explanation: A topic in Kafka is a logical category for organizing streams of related records, like a named feed of events. Partitions, on the other hand, are the physical units of parallelism within a topic: each topic is divided into one or more partitions, which are ordered, immutable sequences of records stored on disk. Partitions enable scalability by allowing multiple producers and consumers to work concurrently. Records with a key are assigned by a hash of the key, so the same key always lands on the same partition; records without a key are spread across partitions (round-robin in older clients, sticky per-batch assignment by default since Kafka 2.4). Ordering is guaranteed only within a partition, not across the whole topic.
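
To make the key-to-partition mapping concrete, here is a minimal sketch. It assumes a stand-in hash (`zlib.crc32`); Kafka’s Java client uses murmur2, so real partition numbers will differ. The function name `choose_partition`, the partition count, and the sample keys are all hypothetical.

```python
import zlib
from collections import Counter

NUM_PARTITIONS = 12  # illustrative partition count


def choose_partition(key: bytes, num_partitions: int) -> int:
    """Map a record key to a partition index (crc32 stands in for murmur2)."""
    return zlib.crc32(key) % num_partitions


# The same key always maps to the same partition, which preserves per-key order.
order_keys = [f"customer-{i}".encode() for i in range(1000)]
load = Counter(choose_partition(k, NUM_PARTITIONS) for k in order_keys)
print(sorted(load.items()))  # records per partition
```

The point of the sketch is the shape of the mapping, not the exact hash: a deterministic function of the key, taken modulo the partition count.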

Real-World Use Case: In an e-commerce platform, an “orders” topic might have 12 partitions to match the number of order-processing servers. This setup lets producers submit orders in parallel, reducing latency during Black Friday surges, while consumers (like inventory updaters) process partitions independently for even load distribution.

2. Partition vs. Partition-Replica

Explanation: A partition is the core data unit in Kafka, representing a single log of records appended sequentially. A partition-replica is a copy of that partition stored on a different broker for fault tolerance—each partition has a leader (handling reads/writes) and followers (replicating data). Replication factor determines how many replicas exist, ensuring data durability even if brokers fail.
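
As a rough illustration of how replicas spread across brokers, the sketch below places each partition’s replicas on consecutive brokers and treats the first one as the leader. This is a simplified assumption: real Kafka randomizes the starting broker and can take racks into account. `assign_replicas` and the broker IDs are hypothetical.

```python
def assign_replicas(num_partitions, brokers, replication_factor):
    """Return {partition: [broker ids]}; the first broker in each list is the leader."""
    if replication_factor > len(brokers):
        raise ValueError("replication factor cannot exceed the number of brokers")
    assignment = {}
    for partition in range(num_partitions):
        start = partition % len(brokers)
        assignment[partition] = [
            brokers[(start + i) % len(brokers)] for i in range(replication_factor)
        ]
    return assignment


layout = assign_replicas(num_partitions=6, brokers=[1, 2, 3], replication_factor=3)
for partition, replicas in layout.items():
    leader, followers = replicas[0], replicas[1:]
    print(f"partition {partition}: leader={leader} followers={followers}")
```

With a replication factor of 3, every partition ends up with one leader and two followers, each on a different broker.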

Real-World Use Case: For a financial trading system logging transactions, setting a replication factor of 3 means each partition has three replicas: one leader and two followers. If a broker crashes during a trading peak, leadership fails over to an in-sync follower, so trades keep flowing and fully replicated writes survive, which helps meet strict uptime SLAs.

3. Difference Between acks=1 and acks=all

Explanation: The acks producer config controls how many acknowledgments the producer needs before it treats a write as successful. acks=1 waits only for the leader to confirm receipt; this is fast but risky, because a record can be lost if the leader crashes before followers copy it. acks=all (or acks=-1) requires the leader and all in-sync replicas (ISR) to have the record, prioritizing durability over speed at the cost of higher latency.
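
The difference shows up when the leader dies before followers catch up. The toy model below is a sketch under simple assumptions: one leader, one follower, and a crash at a chosen point. The function name and event names are hypothetical, and nothing here talks to a real broker.

```python
def lost_after_failover(acks, produced, replicated_count):
    """Return acknowledged records that vanish when the leader dies.

    produced: records the leader appended, in order.
    replicated_count: how many of them the follower had copied at crash time.
    """
    if acks == "1":
        acknowledged = list(produced)  # the leader's append alone triggers the ack
    elif acks == "all":
        acknowledged = list(produced[:replicated_count])  # the ack waits for the ISR
    else:
        raise ValueError("this sketch models only acks=1 and acks=all")
    survived = set(produced[:replicated_count])  # the follower becomes the new leader
    return [record for record in acknowledged if record not in survived]


events = ["e0", "e1", "e2", "e3"]
print(lost_after_failover("1", events, replicated_count=2))    # ['e2', 'e3']
print(lost_after_failover("all", events, replicated_count=2))  # []
```

With acks=1 the producer was told that e2 and e3 were safe, yet they are gone after failover; with acks=all they were never acknowledged, so the producer knows to retry them.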

Real-World Use Case: In a social media feed generator, acks=1 suits high-throughput “likes” events where losing an occasional event is tolerable. For user profile updates, acks=all ensures no acknowledged update is lost, preventing profile inconsistencies that could frustrate users during viral campaigns.

4. min.insync.replicas

Explanation: This config, settable at the broker or topic level, defines the minimum number of in-sync replicas (ISR) that must hold a record for a write to succeed when acks=all. If fewer replicas are in sync (e.g., due to failures), the broker rejects the write with a NotEnoughReplicas error rather than accept it with weaker durability. It balances availability and consistency: higher values mean stricter guarantees but a greater chance that writes become unavailable.
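
The rule fits in a few lines. This is a sketch of the decision only, with a hypothetical `write_outcome` helper and made-up return strings; it is not broker code.

```python
def write_outcome(acks, isr_size, min_insync_replicas):
    """Decide whether a produce request is accepted in this toy model."""
    if isr_size < 1:
        return "rejected: no leader available"
    # min.insync.replicas is only enforced for acks=all producers.
    if acks == "all" and isr_size < min_insync_replicas:
        return "rejected: not enough in-sync replicas"
    return "accepted"


# Replication factor 3, min.insync.replicas=2: losing one broker is fine, two is not.
for isr_size in (3, 2, 1):
    print(isr_size, write_outcome("all", isr_size, min_insync_replicas=2))
```

Note that a producer using acks=1 is not protected by this setting, which is why the two are usually configured together.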

Real-World Use Case: In healthcare IoT systems tracking patient vitals, setting min.insync.replicas=2 with a replication factor of 3 ensures at least two brokers hold every acknowledged alert. If a network glitch knocks out one broker, writes continue on the remaining two. If a second broker drops, producers get an error instead of a silently under-replicated write, so the application knows the alert was not safely stored and can retry or escalate.

5. Producer Idempotence

Explanation: Enabled via enable.idempotence=true (the default in recent client versions), this feature assigns each producer a unique producer ID (PID) and attaches sequence numbers to what it sends, allowing brokers to detect and discard retried duplicates automatically. It prevents duplicates from network retries without app-level logic, turning at-least-once retries into exactly-once, in-order delivery per partition for the lifetime of a producer session.
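
Here is a simplified model of the broker-side check. It assumes one sequence number per record and remembers only the last sequence per producer; the real broker tracks sequences per batch and keeps a short history. `PartitionLog` and its return values are hypothetical.

```python
class PartitionLog:
    """Toy partition that deduplicates retries by (producer ID, sequence number)."""

    def __init__(self):
        self.records = []
        self.last_seq = {}  # producer ID -> last sequence number appended

    def append(self, pid, seq, value):
        last = self.last_seq.get(pid, -1)
        if seq <= last:
            return "duplicate"  # already stored: acknowledge again, append nothing
        if seq != last + 1:
            raise ValueError("out-of-order sequence number")
        self.records.append(value)
        self.last_seq[pid] = seq
        return "appended"


log = PartitionLog()
log.append(pid=7, seq=0, value="deduct 1 x SKU-123")
log.append(pid=7, seq=0, value="deduct 1 x SKU-123")  # retry after a lost ack
log.append(pid=7, seq=1, value="deduct 2 x SKU-456")
print(len(log.records))  # 2
```

The retry is acknowledged but not stored twice, which is exactly what keeps a flaky connection from double-counting a write.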

Real-World Use Case: For inventory management in retail, idempotent producers retry stock deductions during flaky connections without over-deducting items. This avoids phantom shortages during restocks, ensuring accurate shelf counts and smooth customer experiences in omnichannel sales.

6. Exactly Once Guarantees and Transactions

Explanation: Kafka’s exactly-once semantics (EOS) combine idempotence with transactions to atomically produce to multiple topics or partitions. A transaction groups messages between begin and commit/abort calls, ensuring all-or-nothing visibility for consumers that read only committed data. This extends the per-partition guarantee of idempotence across partitions and topics, which makes it ideal for cross-topic consistency.
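
The all-or-nothing behavior can be sketched with an in-memory model. This is an illustration only: `TxnLog` is a hypothetical class, the transaction IDs are made up, and the real protocol involves a transaction coordinator and control markers written into each partition.

```python
class TxnLog:
    """Toy cluster: records are appended immediately, visibility depends on the outcome."""

    def __init__(self):
        self.topics = {}   # topic -> list of (txn_id, value)
        self.outcome = {}  # txn_id -> "committed" or "aborted"

    def send(self, txn_id, topic, value):
        self.topics.setdefault(topic, []).append((txn_id, value))

    def commit(self, txn_id):
        self.outcome[txn_id] = "committed"

    def abort(self, txn_id):
        self.outcome[txn_id] = "aborted"

    def read_committed(self, topic):
        visible = []
        for txn_id, value in self.topics.get(topic, []):
            state = self.outcome.get(txn_id)
            if state is None:
                break  # an open transaction blocks everything after it
            if state == "committed":
                visible.append(value)
        return visible


ledger = TxnLog()
ledger.send("txn-1", "debit", "alice -100")
ledger.send("txn-1", "credit", "bob +100")
ledger.commit("txn-1")
ledger.send("txn-2", "debit", "carol -50")
ledger.abort("txn-2")  # the credit side failed, so the whole transfer is rolled back
print(ledger.read_committed("debit"), ledger.read_committed("credit"))
```

Both topics expose the first transfer and neither exposes any part of the second, even though its debit record was physically appended.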

Real-World Use Case: In banking apps, a transaction spans “debit” and “credit” topics: EOS ensures both succeed or neither, preventing unbalanced ledgers. During peak tax season, this avoids erroneous transfers, maintaining audit trails and regulatory compliance without manual rollbacks.

7. Fetch Isolation Levels: Read Committed vs. Read Uncommitted

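Explanation: isolation.level=read_uncommitted (the default) lets consumers read everything up to the high watermark, including records from transactions that are still open or were later aborted (fast, but consumers may see “ghost” records). read_committed returns records only up to the last stable offset (LSO) and filters out aborted transactions, so consumers never see uncommitted data; the cost is that reads wait behind any open transaction.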
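
A small sketch of the two modes on a single partition follows. It assumes every record shown is already below the high watermark and tags each record with its transaction state directly, which a real log does through control markers. The `fetch` function and the sample data are hypothetical.

```python
def fetch(log, isolation_level):
    """log: list of (value, txn_state); txn_state is None for non-transactional
    records, otherwise 'committed', 'aborted', or 'open'."""
    if isolation_level == "read_uncommitted":
        return [value for value, _ in log]
    if isolation_level != "read_committed":
        raise ValueError("unknown isolation level")
    visible = []
    for value, state in log:
        if state == "open":
            break  # last stable offset: nothing at or past an open transaction
        if state != "aborted":
            visible.append(value)
    return visible


partition = [("a", None), ("b", "committed"), ("c", "aborted"), ("d", "open"), ("e", None)]
print(fetch(partition, "read_uncommitted"))  # ['a', 'b', 'c', 'd', 'e']
print(fetch(partition, "read_committed"))    # ['a', 'b']
```

Notice that `e` is held back in read_committed mode even though it is not transactional, because it sits after an open transaction.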

Real-World Use Case: Analytics pipelines use read_uncommitted for real-time dashboards, tolerating brief inconsistencies for speed. Fraud detection opts for read_committed to avoid acting on aborted suspicious transactions, reducing false alerts in high-stakes financial monitoring.

8. The KIP-848 Consumer Group Protocol

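Explanation: Generally available in Kafka 4.0 (after early-access releases in the 3.x line), KIP-848 overhauls rebalancing with a declarative, reconciliation-based protocol. Instead of stop-the-world assignments coordinated by a client-side group leader, the group coordinator on the broker computes a target assignment and each consumer converges toward it incrementally. Rebalances are reportedly up to 20x faster, and groups can scale dynamically without full pauses.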
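
To see why incremental reconciliation pauses less, compare how many partitions stop being consumed in each style. This is not the KIP-848 algorithm itself, only a sketch of the difference in revocations; the function names and member IDs are hypothetical.

```python
def eager_revocations(current):
    """Stop-the-world rebalance: every member gives up everything it owns."""
    return {member: set(parts) for member, parts in current.items()}


def incremental_revocations(current, target):
    """Incremental reconciliation: a member revokes only what it must hand over."""
    return {
        member: set(parts) - set(target.get(member, ()))
        for member, parts in current.items()
    }


current = {"c1": {0, 1, 2}, "c2": {3, 4, 5}}
target = {"c1": {0, 1}, "c2": {3, 4}, "c3": {2, 5}}  # c3 just joined the group

print(sum(len(p) for p in eager_revocations(current).values()))                # 6
print(sum(len(p) for p in incremental_revocations(current, target).values()))  # 2
```

Only the two partitions that actually change owner are interrupted; the other four keep flowing while the new member joins.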

Real-World Use Case: Video streaming services with elastic consumer groups use KIP-848 to rebalance during auto-scaling events, like viewer spikes. This minimizes playback interruptions, keeping seamless delivery for millions without the lag of traditional protocols during global events.

9. KIP-405 Tiered Storage

Explanation: KIP-405 adds a remote storage tier alongside local disks, offloading older log segments to cheaper cloud/object storage while keeping hot data local. It enables very long retention without exploding broker disk costs, and brokers fetch remote segments transparently when a consumer asks for old offsets, so clients need no changes.
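
The two retention windows can be pictured like this. The config keys (`remote.storage.enable`, `local.retention.ms`, `retention.ms`) are real topic settings, but the values and the `read_source` helper are assumptions for illustration, and the model ignores that segments are copied to the remote tier before their local copy expires.

```python
DAY_MS = 24 * 60 * 60 * 1000

# Hypothetical topic settings: 7 days on local disk, 1 year in total.
topic_config = {
    "remote.storage.enable": "true",
    "local.retention.ms": 7 * DAY_MS,
    "retention.ms": 365 * DAY_MS,
}


def read_source(segment_age_ms, config):
    """Say where a read for a segment of the given age would be served from."""
    if segment_age_ms > config["retention.ms"]:
        return "expired"
    if segment_age_ms > config["local.retention.ms"]:
        return "remote tier"
    return "local disk"


for age_days in (1, 30, 400):
    print(age_days, read_source(age_days * DAY_MS, topic_config))
```

Recent data stays on fast local disks, older data is still readable from object storage, and only data past the overall retention limit is gone.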

Real-World Use Case: Compliance-heavy workloads such as audit logging use tiered storage to retain years of data affordably. A telecom firm keeps call records on local disk for 7 days to serve recent queries and tiers the rest to S3, slashing costs while still letting consumers replay historical records for fraud analysis, typically at higher read latency than local disk.

10. KIP-392 Fetch From Follower

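Explanation: KIP-392 lets consumers fetch from the closest replica (a follower) instead of always the leader, reducing latency and cross-zone or cross-region data transfer. The consumer advertises its location with client.rack, and a broker-side replica selector (configured via replica.selector.class) tells it which in-sync replica to read from. Followers serve only data below the high watermark, so offset consistency is preserved. This is most useful in clusters stretched across zones or regions.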
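
The selection logic is roughly "same rack if possible, otherwise the leader". The sketch below assumes that simple rule; `preferred_read_replica`, the broker IDs, and the rack names are hypothetical.

```python
def preferred_read_replica(client_rack, leader, in_sync_replicas, broker_racks):
    """Pick a replica in the consumer's rack, falling back to the leader."""
    if client_rack is None:
        return leader  # a consumer without client.rack always reads from the leader
    for broker in in_sync_replicas:
        if broker_racks.get(broker) == client_rack:
            return broker
    return leader


broker_racks = {1: "us-east", 2: "eu-west", 3: "ap-south"}
print(preferred_read_replica("eu-west", 1, [1, 2, 3], broker_racks))  # 2
print(preferred_read_replica("sa-east", 1, [1, 2, 3], broker_racks))  # 1
```

A consumer in eu-west reads from the local follower, while a consumer in a region with no replica falls back to the leader.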

Real-World Use Case: Global gaming platforms deploy multi-region Kafka; followers in each zone serve local players, cutting egress fees and ping times. During cross-server raids, this ensures sub-50ms event delivery, enhancing immersion without central bottlenecks.

11. Producer linger.ms and batch.size Settings

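Explanation: linger.ms makes the producer wait briefly so more records can join a batch (historically 0 by default, meaning send as soon as possible; newer releases raise the default to 5), while batch.size caps the bytes in a single per-partition batch (default 16 KB). Together, they trade latency for throughput: higher values amortize per-request overhead but add delay. Both are producer-level settings, so they are tuned per producer instance rather than per topic.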
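
The interaction between the two settings can be simulated. This sketch assumes a single partition and a sender that is never busy, so a batch closes only when linger.ms has elapsed or the next record would not fit; a real producer also batches whenever records arrive faster than it can send. `batch_records` and the arrival pattern are hypothetical.

```python
def batch_records(arrivals, linger_ms, batch_size):
    """arrivals: list of (time_ms, size_bytes) sorted by time. Returns a list of batches."""
    batches, current, current_bytes, first_ts = [], [], 0, None
    for ts, size in arrivals:
        linger_expired = bool(current) and ts - first_ts >= linger_ms
        would_overflow = bool(current) and current_bytes + size > batch_size
        if linger_expired or would_overflow:
            batches.append(current)  # ship the batch we have been filling
            current, current_bytes = [], 0
        if not current:
            first_ts = ts  # the linger timer starts with the first record of a batch
        current.append(size)
        current_bytes += size
    if current:
        batches.append(current)
    return batches


# One 100-byte record per millisecond for 10 ms.
arrivals = [(t, 100) for t in range(10)]
print(len(batch_records(arrivals, linger_ms=0, batch_size=16384)))  # 10
print(len(batch_records(arrivals, linger_ms=5, batch_size=16384)))  # 2
print(len(batch_records(arrivals, linger_ms=5, batch_size=300)))    # 4
```

A few milliseconds of linger cuts ten requests down to two, and a small batch.size pushes the count back up because batches fill before the timer expires.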

Real-World Use Case: IoT sensor networks set linger.ms=5 and larger batch.size to bundle telemetry, reducing network chatter. In smart factories, this aggregates machine data efficiently, lowering bandwidth costs and enabling real-time anomaly detection without overwhelming edges.

12. Unclean Leader Election

Explanation: When a leader fails and no in-sync replica remains, unclean.leader.election.enable=true allows an out-of-sync replica to become leader, prioritizing availability over consistency (any records the new leader never copied are lost). When it is disabled, which is the default, the partition stays offline until a replica from the last ISR comes back.
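
The election rule can be sketched as a small decision function. This is a toy model with hypothetical names and return values, not controller code.

```python
def elect_leader(replicas, isr, alive, unclean_enabled):
    """Return (leader, kind); leader is None when the partition must stay offline."""
    for broker in replicas:
        if broker in isr and broker in alive:
            return broker, "clean"
    if unclean_enabled:
        for broker in replicas:
            if broker in alive:
                return broker, "unclean (possible data loss)"
    return None, "offline"


# Broker 1 was the only in-sync replica and has just died; 2 and 3 are behind.
print(elect_leader([1, 2, 3], isr={1}, alive={2, 3}, unclean_enabled=False))
print(elect_leader([1, 2, 3], isr={1}, alive={2, 3}, unclean_enabled=True))
```

The first call leaves the partition offline; the second promotes broker 2 and accepts that whatever it had not replicated is gone.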

Real-World Use Case: High-availability chat apps enable it for partitions with low replication, accepting rare gaps to keep messaging alive. During outages, users continue conversations seamlessly, with post-mortem reconciliation fixing minor losses in non-critical threads.

13. Partition Reassignments

Explanation: A reassignment moves partition replicas between brokers, either manually or through automation, to balance load or to bring brokers into or out of the cluster. A good plan also minimizes how much data has to move. Tools like kafka-reassign-partitions execute the plan and support throttling the replication traffic so a live cluster is not overloaded.
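
The reassignment tool takes its plan as a JSON file. The sketch below generates one; the topic name, broker IDs, and the `reassignment_plan` helper are made up for illustration.

```python
import json


def reassignment_plan(topic, new_replicas_by_partition):
    """Build a plan in the JSON layout kafka-reassign-partitions expects."""
    return {
        "version": 1,
        "partitions": [
            {"topic": topic, "partition": partition, "replicas": replicas}
            for partition, replicas in sorted(new_replicas_by_partition.items())
        ],
    }


# Move one replica of each partition onto a newly added broker 4.
plan = reassignment_plan("orders", {0: [1, 2, 4], 1: [2, 3, 4]})
print(json.dumps(plan, indent=2))
```

Saved to a file, a plan like this would be passed to the tool with `--reassignment-json-file` and `--execute`, usually together with `--throttle` to cap the bytes per second used for the move.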

Real-World Use Case: Cloud auto-scaling e-commerce clusters reassign partitions when adding nodes for holiday traffic. This evens CPU usage across brokers, preventing hotspots that could delay order confirmations, ensuring scalable growth without downtime.

14. Difference Between Key-Based Log Compaction vs. Time/Size Based Log Retention

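Explanation: Log compaction (key-based) retains at least the latest value for each key indefinitely and removes older values for that key in the background, which is great for stateful streams. A record with a null value, called a tombstone, marks a key for deletion and is itself removed after a grace period. Retention by time or size instead deletes whole segments once they pass a threshold, keeping storage bounded for transient logs.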
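
The two cleanup policies behave quite differently on the same data. This sketch assumes compaction runs to completion in one pass and drops tombstones immediately, whereas a real broker compacts in the background and keeps tombstones for a while. The function names and sample records are hypothetical.

```python
def compact(log):
    """log: list of (key, value); a None value is a tombstone.
    Returns surviving (offset, key, value) tuples."""
    latest = {key: offset for offset, (key, _) in enumerate(log)}
    return [
        (offset, key, value)
        for offset, (key, value) in enumerate(log)
        if latest[key] == offset and value is not None
    ]


def apply_time_retention(segments, now_ms, retention_ms):
    """segments: list of (last_timestamp_ms, records). Drops whole expired segments."""
    return [seg for seg in segments if now_ms - seg[0] <= retention_ms]


log = [("u1", "login@09:00"), ("u2", "login@09:05"), ("u1", "login@10:30"), ("u2", None)]
print(compact(log))  # [(2, 'u1', 'login@10:30')]

segments = [(1_000, ["old"]), (9_000, ["new"])]
print(apply_time_retention(segments, now_ms=10_000, retention_ms=5_000))  # [(9000, ['new'])]
```

Compaction keeps surviving records at their original offsets, while time-based retention ignores keys and removes entire segments by age.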

Real-World Use Case: User session stores use compaction to keep current profiles (latest login per user), discarding history. Analytics use time-based retention for 30-day event logs, optimizing disk for queries while complying with GDPR data expiry in marketing campaigns.

15. High Watermark Offset / Last Stable Offset

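Explanation: The high watermark (HW) is the offset up to which all in-sync replicas have replicated, and it defines the data that consumers are allowed to read. The last stable offset (LSO) is the offset of the first record that still belongs to an open transaction, or the HW if no transaction is open. Everything below the LSO has a decided outcome (committed or aborted), which is why read_committed consumers read only up to it.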
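
Both offsets reduce to a minimum over simple inputs. This is a sketch under the definitions above, with hypothetical function names, broker names, and offsets.

```python
def high_watermark(isr_log_end_offsets):
    """The HW is bounded by the slowest in-sync replica."""
    return min(isr_log_end_offsets.values())


def last_stable_offset(hw, open_txn_first_offsets):
    """The LSO is the first offset of the earliest open transaction, capped at the HW."""
    return min([hw, *open_txn_first_offsets])


log_end_offsets = {"broker-1": 120, "broker-2": 118, "broker-3": 115}
hw = high_watermark(log_end_offsets)
print(hw)                                                     # 115
print(last_stable_offset(hw, open_txn_first_offsets=[110]))   # 110
print(last_stable_offset(hw, open_txn_first_offsets=[]))      # 115
```

A read_uncommitted consumer could read up to offset 115 here, while a read_committed consumer stops at 110 until the open transaction finishes.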

Real-World Use Case: Supply chain trackers rely on HW for durable shipment updates across replicas. In EOS-enabled inventory syncs, LSO prevents reading partial batches, ensuring atomic stock levels during multi-warehouse transfers without overcommitments.

16. Dynamic Configurations

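Explanation: Kafka supports runtime config changes through the Admin API and the kafka-configs tool, such as altering topic retention or min.insync.replicas without restarts. Overrides can be set per broker, cluster-wide, per topic, or per client (for quotas), and they are stored and propagated via ZooKeeper or KRaft, enabling agile tuning in production. Not everything is a dynamic config: a topic’s replication factor, for example, is changed through a partition reassignment instead.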
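
For broker settings, the effective value is resolved by precedence: a dynamic per-broker override beats a dynamic cluster-wide default, which beats the static server.properties value, which beats the built-in default. The sketch below models that lookup with `ChainMap`; the config names are real, but the values are illustrative rather than authoritative defaults.

```python
from collections import ChainMap

# Illustrative values only; lowest precedence first.
built_in_defaults = {"log.retention.ms": 604_800_000, "num.io.threads": 8}
static_server_properties = {"num.io.threads": 16}
dynamic_cluster_default = {"log.retention.ms": 259_200_000}
dynamic_per_broker = {"num.io.threads": 32}

# ChainMap searches its maps left to right, so list the highest precedence first.
effective = ChainMap(
    dynamic_per_broker,
    dynamic_cluster_default,
    static_server_properties,
    built_in_defaults,
)

print(effective["num.io.threads"])    # 32
print(effective["log.retention.ms"])  # 259200000

# Removing the per-broker override falls back to the static file's value.
del dynamic_per_broker["num.io.threads"]
print(effective["num.io.threads"])    # 16
```

This layering is what lets an operator apply a temporary override at runtime and later remove it without touching the files on disk.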

Real-World Use Case: A/B testing platforms dynamically tweak topic retention for experiment data, shortening it once the analysis is done. The same mechanism can adjust min.insync.replicas on the fly during traffic spikes, minimizing ops interventions and accelerating iterations.

Cheers,

Sim